SECURING ARTIFICIAL INTELLIGENCE AGAINST INTELLIGENT ADVERSARIES: ROBUST LEARNING FRAMEWORKS FOR ADVERSARIAL, POISONING, AND MODEL EXTRACTION ATTACKS

DOI:

https://doi.org/10.71146/kjmr853Keywords:

adversarial attacks, artificial intelligence security, model extraction, poisoning attacks, robust learning frameworkAbstract

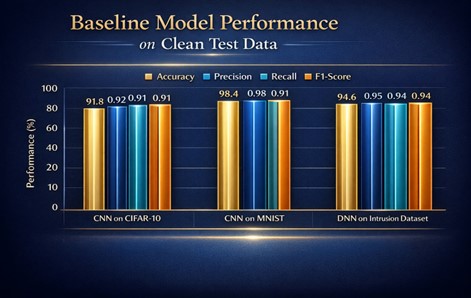

The rapid proliferation of artificial intelligence (AI) systems across critical domains has heightened concerns regarding their vulnerability to intelligent adversaries. This study evaluated the robustness of machine learning models against adversarial (evasion), poisoning, and model extraction attacks and proposed a multi-layered robust learning framework to mitigate these threats. Experimental results demonstrated that baseline models experienced accuracy degradation of up to 37.6% under PGD adversarial attacks, while poisoning contamination reduced performance by more than 23% and produced backdoor trigger success rates exceeding 92%. Model extraction fidelity reached 89.3%, indicating substantial intellectual property risks. The proposed framework integrated adversarial training, anomaly-based data sanitization, and privacy-preserving output perturbation mechanisms. Following implementation, adversarial robustness improved by up to 26.3%, poisoning attack success rates declined below 13%, and extraction fidelity decreased by 24.2%. Importantly, these improvements were achieved with less than 3% reduction in clean-data accuracy, confirming that enhanced security did not significantly compromise predictive utility. Statistical analysis indicated that robustness improvements were significant at p < 0.05 across all attack categories. The findings emphasized that defense-in-depth architectures provided superior resilience compared to isolated mitigation techniques. The study contributed to secure and trustworthy AI development by presenting a scalable framework capable of addressing multi-stage intelligent adversarial threats while maintaining operational performance stability.

Downloads

Downloads

Published

Issue

Section

Categories

License

Copyright (c) 2026 Asif Ahmad, Khair Muhammad Saraz, Imran Khan, Shadia Saad Baloch, Amber Baig, Ghulam Nabi (Author)

This work is licensed under a Creative Commons Attribution 4.0 International License.