OPERATIONAL ANDROID MALWARE FILTERING: CALIBRATED PROBABILITIES AND DISTRIBUTION-FREE GUARANTEES

DOI: https://doi.org/10.71146/kjmr778

Keywords:

Android, Android malware detection, Android security, malware detection, malware classifier, probability calibration, conformal prediction, selective prediction, reliability, abstention

Abstract

Objectives— We build and rigorously evaluate an Android malware detector that optimizes not only discrimination (how well samples are ranked) but also reliability: (i) probabilities that match empirical risk and (ii) explicit, distribution-free uncertainty quantification. Our goals are to (1) benchmark strong tabular baselines on a canonical CSV corpus; (2) calibrate scores so that a value like 0.9 corresponds to “roughly 90% malicious”; and (3) add inductive conformal prediction (ICP) to provide finite-sample coverage guarantees and a principled abstain option.
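To make goal (2) concrete, the following is a minimal sketch of isotonic calibration using the pool-adjacent-violators idea, written with the Python standard library only. The function names `fit_isotonic` and `calibrate` and the toy scores are illustrative assumptions; the abstract does not state which implementation the authors used.

```python
from bisect import bisect_left

def fit_isotonic(scores, labels):
    """Fit a non-decreasing step function from raw scores to observed malware rates.

    Returns a list of (highest_score_in_block, calibrated_probability) steps.
    """
    blocks = []  # each block: [label_sum, count, highest_score_in_block]
    for s, y in sorted(zip(scores, labels)):
        if blocks and blocks[-1][2] == s:
            # identical scores must share one calibrated value
            blocks[-1][0] += y
            blocks[-1][1] += 1
        else:
            blocks.append([float(y), 1, s])
        # pool adjacent blocks while their means fail to increase
        while len(blocks) > 1 and (blocks[-2][0] / blocks[-2][1]
                                   >= blocks[-1][0] / blocks[-1][1]):
            total, n, hi = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += n
            blocks[-1][2] = hi
    return [(hi, total / n) for total, n, hi in blocks]

def calibrate(steps, score):
    """Map a raw score to the calibrated probability of its step."""
    uppers = [hi for hi, _ in steps]
    i = min(bisect_left(uppers, score), len(steps) - 1)
    return steps[i][1]

# Toy calibration data (hypothetical): raw classifier scores and true labels.
toy_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]
toy_labels = [0, 1, 0, 0, 1, 1]
steps = fit_isotonic(toy_scores, toy_labels)
```

The fitted steps would be learned on the held-out calibration split and then applied to test scores, so that the calibrated value approximates the observed malware rate among similarly scored apps.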

Methodology— The pipeline: (i) ingest and sanitize a dataset of 15,036 applications with static features; (ii) encode mixed datatypes via one-hot and factorization; (iii) train Logistic Regression (LR), Random Forest (RF), and XGBoost (GBDT); (iv) report ROC–AUC, PR–AUC, F1, Accuracy, Brier score; (v) apply post hoc calibration (Platt, Isotonic) and compute Expected Calibration Error (ECE) with reliability diagrams including Wilson intervals; and (vi) deploy ICP to return prediction sets achieving target error α ∈ {0.10,0.05,0.01}, yielding selective automation.
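Step (v) can be illustrated with a short sketch of Expected Calibration Error and the Wilson intervals drawn on a reliability diagram. It assumes equal-width bins over the predicted malware probability and a 95% interval (z = 1.96); the abstract does not specify the binning scheme or confidence level, so treat both as placeholders.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is an assumed 95% level)."""
    if n == 0:
        return (0.0, 1.0)
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return (max(0.0, center - half), min(1.0, center + half))

def reliability_table(probs, labels, n_bins=10):
    """One row per non-empty bin: size, mean predicted probability, observed rate, interval."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[idx].append((p, y))
    rows = []
    for members in bins:
        if not members:
            continue
        n = len(members)
        positives = sum(y for _, y in members)
        rows.append({
            "n": n,
            "mean_prob": sum(p for p, _ in members) / n,
            "frac_pos": positives / n,
            "wilson": wilson_interval(positives, n),
        })
    return rows

def expected_calibration_error(probs, labels, n_bins=10):
    """Bin-size-weighted mean gap between predicted probability and observed rate."""
    total = len(probs)
    return sum(r["n"] / total * abs(r["frac_pos"] - r["mean_prob"])
               for r in reliability_table(probs, labels, n_bins))
```

For example, ten apps scored 0.9 of which nine are malicious give an ECE of about zero, while the same scores with no malicious apps give an ECE of about 0.9.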

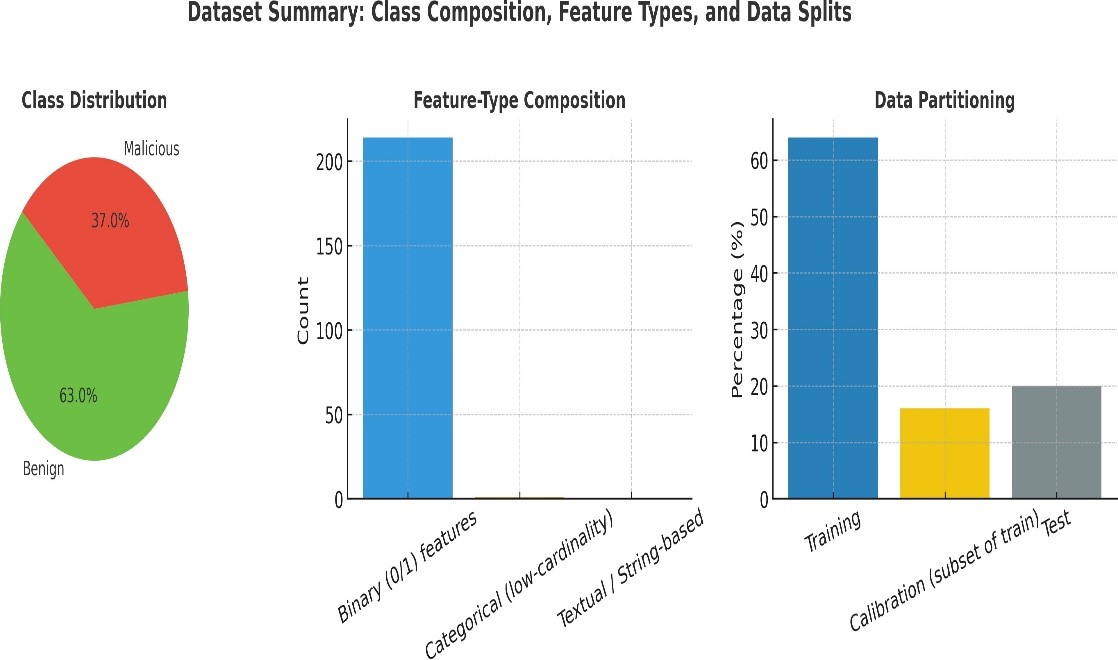

Dataset— A text-only (CSV) Android corpus of static attributes (manifest/permissions/API-like proxies) with a binary label (benign/malicious). Preprocessing drops identifiers, coerces types, imputes missing values, applies de-duplication, and uses an 80/20 stratified split with a 20% calibration carve-out from training (effective 64/16/20 train/cal/test).
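The effective 64/16/20 proportions follow from holding out 20% for testing and then carving 20% of the remaining 80% for calibration (0.8 × 0.2 = 0.16). A minimal standard-library sketch of such a two-stage stratified split is shown here; the helper names and the fixed seed are assumptions, not the authors' script.

```python
import random
from collections import defaultdict

def stratified_split(indices, labels, holdout_frac, rng):
    """Split indices into (kept, held_out), preserving per-class proportions."""
    by_class = defaultdict(list)
    for i in indices:
        by_class[labels[i]].append(i)
    kept, held = [], []
    for cls in sorted(by_class):
        members = by_class[cls]
        rng.shuffle(members)
        k = round(len(members) * holdout_frac)
        held.extend(members[:k])
        kept.extend(members[k:])
    return kept, held

def train_cal_test_split(labels, seed=0):
    """80/20 train/test split, then a 20% calibration carve-out from the training part."""
    rng = random.Random(seed)  # the seed value is an arbitrary assumption
    all_idx = list(range(len(labels)))
    train_full, test = stratified_split(all_idx, labels, 0.20, rng)
    train, cal = stratified_split(train_full, labels, 0.20, rng)
    return train, cal, test
```

Keeping the calibration indices disjoint from the training indices matters here: both post hoc calibration and ICP rely on scores from samples the model did not see during fitting.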

Results— RF/GBDT provide the best ranking. Calibration consistently lowers ECE/Brier and aligns reliability curves with the identity line; we visualize the effect for RF. ICP tracks the nominal target coverage closely with average set size near one, indicating selective abstentions. We include a temporal slice analysis to illustrate robustness under modest distribution shift, plus an operational thresholding example linking (α,t) to analyst load.
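The ICP behaviour described above can be sketched as follows. This is an illustrative version that assumes the nonconformity score is one minus the predicted probability of the candidate label; the abstract does not state which nonconformity measure the authors chose, and the calibration values are toy data.

```python
def nonconformity(p_malicious, label):
    """Assumed score: 1 minus the predicted probability of the candidate label."""
    p_label = p_malicious if label == 1 else 1.0 - p_malicious
    return 1.0 - p_label

def icp_prediction_set(cal_scores, p_malicious, alpha):
    """Return the labels (0 = benign, 1 = malicious) whose conformal p-value exceeds alpha."""
    n = len(cal_scores)
    chosen = set()
    for label in (0, 1):
        a = nonconformity(p_malicious, label)
        # share of calibration samples at least as nonconforming as this candidate
        p_value = (sum(1 for s in cal_scores if s >= a) + 1) / (n + 1)
        if p_value > alpha:
            chosen.add(label)
    return chosen

# Toy calibration split (hypothetical): predicted P(malicious) and true label.
toy_cal = [(0.95, 1), (0.90, 1), (0.85, 1), (0.80, 1), (0.60, 1),
           (0.10, 0), (0.05, 0), (0.20, 0), (0.15, 0)]
cal_scores = [nonconformity(p, y) for p, y in toy_cal]

confident = icp_prediction_set(cal_scores, 0.97, alpha=0.10)
ambiguous = icp_prediction_set(cal_scores, 0.50, alpha=0.10)
cautious = icp_prediction_set(cal_scores, 0.50, alpha=0.01)
```

A singleton set can be acted on automatically, while an empty or two-label set is a natural trigger for abstention. Lowering α makes the sets larger, which is why an average set size near one at the target coverage suggests that most samples receive a single label.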

Applications— Calibrated probabilities support auditable thresholds in app stores and enterprise gateways; ICP provides a knob for predictable abstentions under finite-sample guarantees. The approach is model-agnostic, simple to reproduce, and easy to retrofit in static or hybrid pipelines.
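One way such a deployment policy could look is sketched below. The routing rule, the action names, and the threshold t = 0.9 are hypothetical and are not taken from the paper; the sketch only shows how a conformal prediction set and a calibrated threshold might jointly determine the share of samples sent to analysts.

```python
def triage(pred_set, p_malicious, t):
    """Hypothetical routing rule combining an ICP prediction set with threshold t.

    pred_set uses 0 for benign and 1 for malicious.
    """
    if pred_set == {1} and p_malicious >= t:
        return "block"
    if pred_set == {0} and p_malicious < t:
        return "allow"
    return "review"  # empty, two-label, or below-threshold cases go to an analyst

def analyst_load(decisions):
    """Fraction of samples routed to manual review."""
    return decisions.count("review") / len(decisions)

# Toy batch (hypothetical): prediction sets with calibrated probabilities.
batch = [({1}, 0.97), ({0}, 0.03), (set(), 0.50), ({0, 1}, 0.60), ({1}, 0.70)]
decisions = [triage(s, p, t=0.9) for s, p in batch]
load = analyst_load(decisions)
```

Sweeping α (which changes the prediction sets) and t over a grid and recording the resulting load is one simple way to produce an auditable operating table.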

Availability— Scripts for preprocessing, calibration, ICP, metric computation, and figure generation, along with CSV/PNG artifacts referenced in the paper, are provided in the artifact bundle.

License

Copyright (c) 2025 Basit Raza Dharejo, Prof. Dr. Abdullah Maitlo, Zahid Hussain Shar, Iqra Hyder (Author)

This work is licensed under a Creative Commons Attribution 4.0 International License.